If it was not part of your daily or weekly routine to take part in video conferences before the outbreak of COVID-19, it most likely is now. During a video conference it is crucial that picture and sound travel across the globe as fast as possible; it is less important that every single image frame and every millisecond of sound arrives at the recipient’s machine. As long as most of them do, the video and sound streams work just fine.

During a video conference, the application on your machine and the applications on the other participants’ computers need to be able to communicate with each other. To get video and sound to the other machines, the application needs to transport the information, and to do this it uses a transport layer protocol.

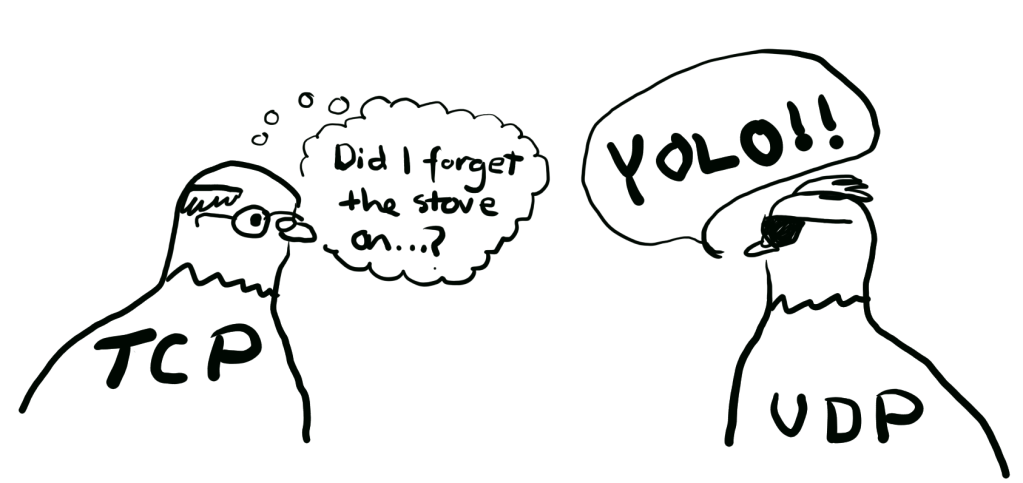

This post is a sister post to Oversimplifying the Internet: TCP. TCP is one of the two most widely used transport layer protocols on the Internet. It definitely has its merits, above all its ability to make sure that all information arrives at the destination. This comes at the cost of some latency, due to the handshake procedure and the occasional need to re-transmit missing packets. In our video conference example, however, we specifically did not require that every packet arrives at the destination; all we cared about was delivering the packets with as little latency as possible. Thus, we present the second of the two most widely used transport layer protocols: UDP.

TCP and UDP are by far the most utilised transport protocols on the Internet, which is why each of them deserves its own post. They are, however, on quite different levels in terms of complexity, as you will soon notice if you have read the previous post on TCP. For easier visualisation, we will again use our trusted carrier pigeons to transfer our data packets, together with screen captures from Wireshark showing what the information actually looks like!

UDP

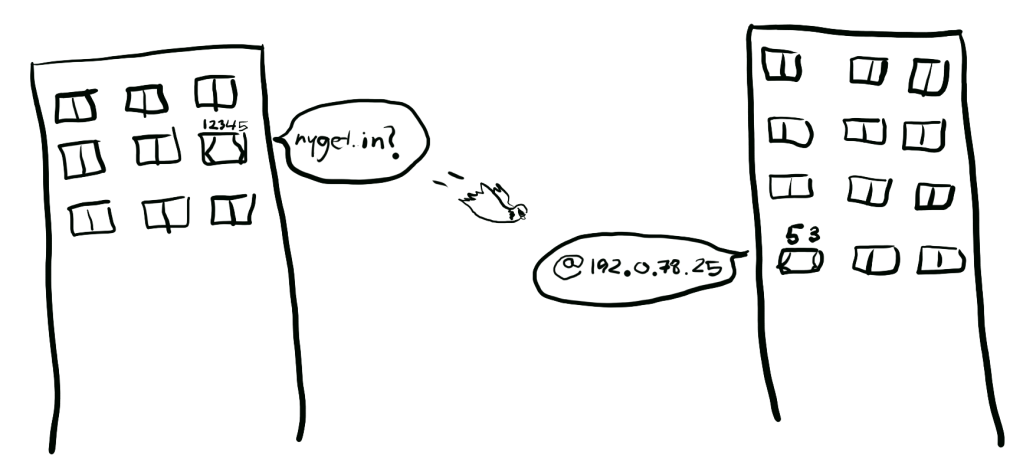

Let us begin with a new term: UDP “packets” are called datagrams, so we will use that word from here on. Unlike TCP, UDP has no concept of a connection: there is no beginning and no end. But like TCP packets, UDP datagrams have source and destination ports. When one endpoint sends a UDP datagram to another, the second endpoint can reply by sending a UDP datagram back to the port that the first endpoint sent its datagram from. Again, you can visualise the two computers as apartment buildings: the pigeon needs to know which window to deliver the message to.

In addition to the ports, a UDP datagram specifies its length (the 8-byte header plus the data it is transporting) and a checksum, which can be used to verify that the datagram has not changed while it was being transported. (Note that TCP packets carry ports and a checksum too!) And that is it. That is all there is to UDP.
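To make the header concrete, here is a minimal Python sketch that splits the first 8 bytes of a datagram into the four fields described above; each is an unsigned 16-bit number in network byte order. The names `UdpHeader` and `parse_udp_header` are our own, chosen for illustration; in practice the operating system does this parsing for you.

```python
import struct
from typing import NamedTuple


class UdpHeader(NamedTuple):
    """The four 16-bit fields at the start of every UDP datagram."""
    source_port: int
    destination_port: int
    length: int    # header (8 bytes) + payload, in bytes
    checksum: int  # over IPv4, 0 means "no checksum computed"


UDP_HEADER = struct.Struct("!HHHH")  # four unsigned 16-bit ints, network (big-endian) order


def parse_udp_header(datagram: bytes) -> UdpHeader:
    """Read the 8-byte header at the front of a raw UDP datagram."""
    if len(datagram) < UDP_HEADER.size:
        raise ValueError("a UDP datagram is at least 8 bytes long")
    return UdpHeader(*UDP_HEADER.unpack_from(datagram))


# Ports and length taken from the DNS query discussed later in this post;
# the checksum is zeroed and the payload replaced by 37 zero bytes for the example.
sample = bytes.fromhex("eba10035002d0000") + bytes(37)
print(parse_udp_header(sample))
# UdpHeader(source_port=60321, destination_port=53, length=45, checksum=0)
```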

Remember that UDP is only a transport protocol: it transports information between two applications. Whatever happens on the application layer is dictated by the application layer protocol that the application uses. One example of an application layer protocol that is often transported over UDP is DNS, which was explored in What happens when you browse to nyget.in.

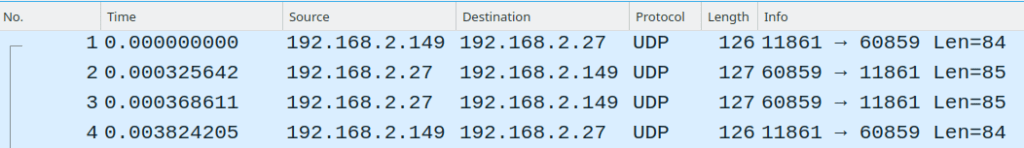

So, let us look at a few UDP datagrams being transported between a client and a server. We will revisit DNS, but this time we will ignore what happens on the application layer (the actual DNS queries and responses) and focus on UDP instead. We will skip the checksums, as we did not focus on them with TCP either.

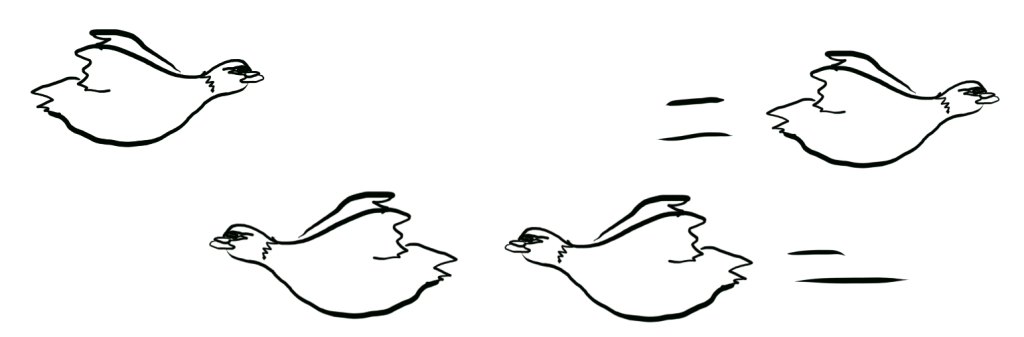

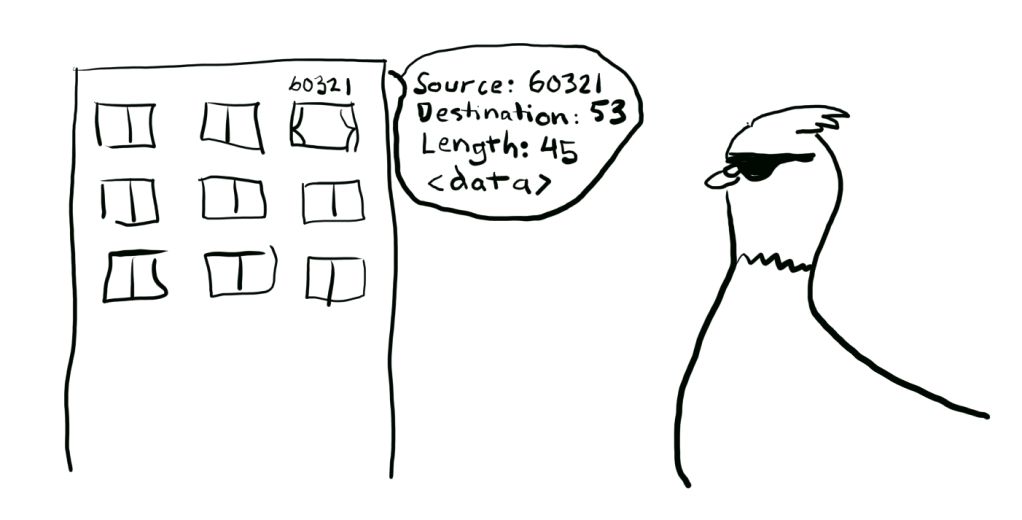

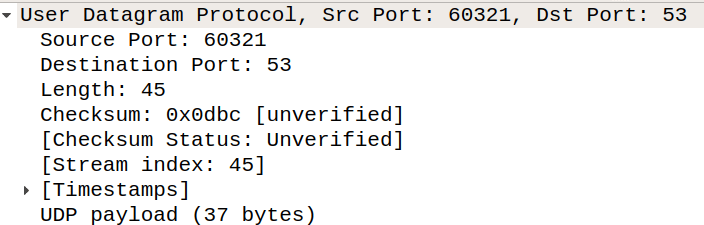

UDP port 53 is registered for DNS, so that is the server port the client sends its UDP datagram to. The client selects 60321 as its source port. The length of the UDP datagram is 45 bytes: 8 bytes of UDP header and 37 bytes of payload. The pigeon is sent on its way.
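The length arithmetic can be sketched in a few lines of Python. The helper `build_udp_datagram` is hypothetical, and the payloads are zero-filled stand-ins of the right size rather than the real DNS query and response. The checksum is left as 0, which over IPv4 means “no checksum computed” (over IPv6 the checksum is mandatory).

```python
import struct

UDP_HEADER = struct.Struct("!HHHH")  # source port, destination port, length, checksum


def build_udp_datagram(src_port: int, dst_port: int, payload: bytes) -> bytes:
    """Prefix a payload with a UDP header whose length field covers header + payload."""
    length = UDP_HEADER.size + len(payload)  # 8 + number of payload bytes
    checksum = 0  # assumption: checksum skipped, which IPv4 allows
    return UDP_HEADER.pack(src_port, dst_port, length, checksum) + payload


query = build_udp_datagram(60321, 53, bytes(37))     # stand-in for the 37-byte DNS query
response = build_udp_datagram(53, 60321, bytes(69))  # stand-in for the 69-byte DNS response
print(len(query), len(response))  # 45 77
```

The reply simply swaps the two ports, which is all a UDP “conversation” amounts to.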

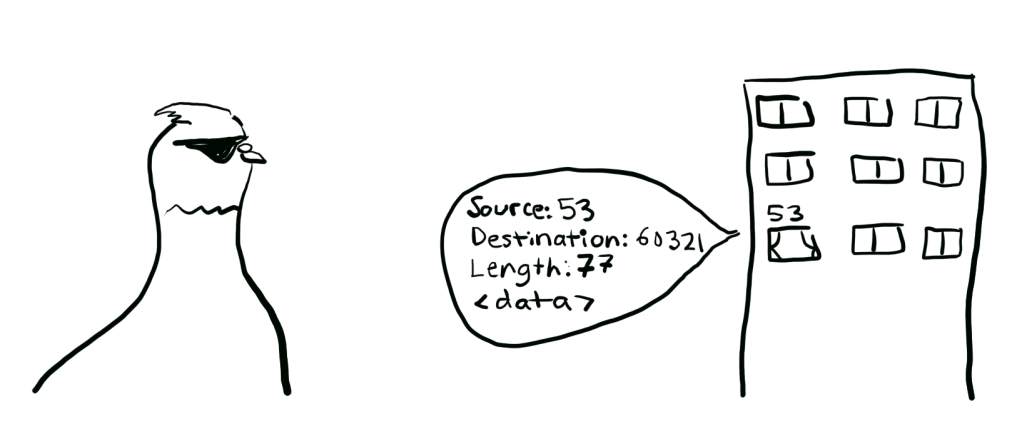

The pigeon reaches the server and delivers the UDP datagram to the open port 53. The server replies with a 77-byte datagram, which again consists of 8 bytes of UDP header and 69 bytes of payload. It sends the datagram back to the client’s port 60321, with 53 as the source port.

The pigeon gets back to the client and delivers the server reply. This finishes the communication between the client and the server. One message out and one message in.
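The same one-out, one-in exchange can be reproduced on a single machine with Python’s standard `socket` module. This is a sketch that assumes the loopback interface is available; the OS picks the ports (port 0 on `bind`), so the “server” is not actually on port 53, and the payloads are placeholders rather than real DNS messages. `udp_round_trip` is a hypothetical helper name.

```python
import socket
from typing import Tuple


def udp_round_trip(query: bytes, response: bytes) -> Tuple[bytes, int, int]:
    """One datagram out, one datagram back, over the loopback interface.

    Returns (reply payload, client's source port, port the reply came from).
    """
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as server, \
            socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as client:
        # The "server" opens a port; 0 lets the OS choose a free one (stand-in for 53).
        server.bind(("127.0.0.1", 0))
        server.settimeout(2.0)
        client.settimeout(2.0)

        # No handshake: the client just sends, and the OS gives it an ephemeral source port.
        client.sendto(query, server.getsockname())

        # The server learns the client's address and port from the datagram itself...
        _query, client_addr = server.recvfrom(2048)
        # ...and replies to exactly that port, using its own port as the source.
        server.sendto(response, client_addr)

        reply, server_addr = client.recvfrom(2048)
        return reply, client_addr[1], server_addr[1]


reply, client_port, server_port = udp_round_trip(b"query", b"response")
print(reply)  # b'response'
```

Notice what is missing: there is no `connect`, `listen` or `accept`, only two `sendto` calls and two `recvfrom` calls, one per pigeon.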

On the application layer, the DNS client asked where nyget.in is located, and the server replied with the IP address of nyget.in. This example shows one benefit of UDP: only a minimal number of packets was needed to get the information across. The pigeon flew once to the server to deliver the query, and once back to deliver the response. Had the same been done with TCP, many more packets would have been flying: first the 3-way handshake, then the query and the response, and finally the packets needed to close the connection.

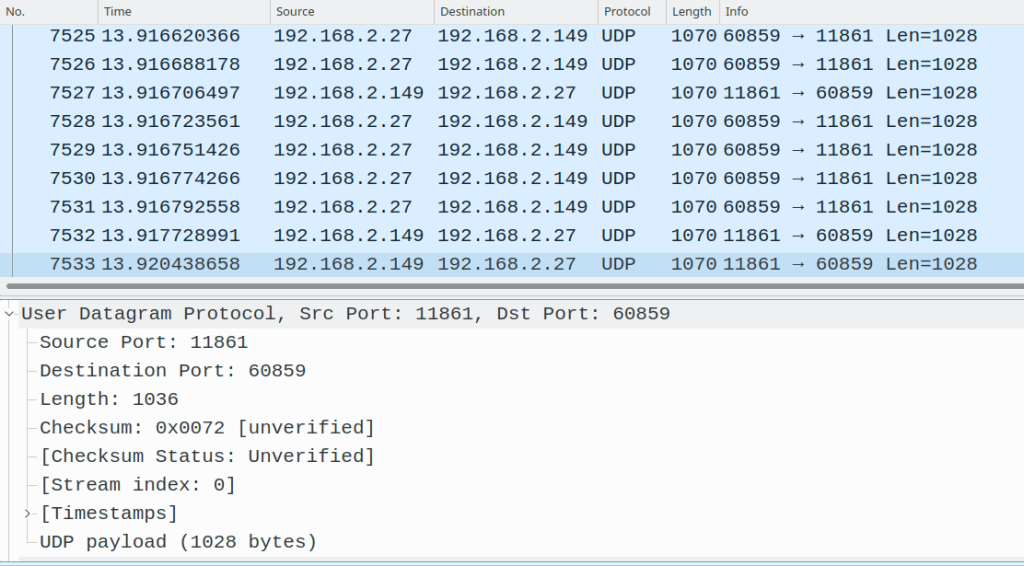

Here is another example of UDP being used: an ongoing Zoom video conference. Both sides have their cameras on, so there is a constant flow of pigeons between the endpoints. Each pigeon is transporting a UDP datagram containing a small fragment of the video to the other side.

As the examples show, UDP has two merits: in short interactions it requires considerably fewer packets between the endpoints, and in long interactions the datagrams can keep flowing without any regard for whether earlier datagrams have reached the destination. With TCP, you might not notice it if one or two packets were lost and had to be re-transmitted. However, latency starts piling up when a lot of packets get lost on the way, and packet loss is very common on the Internet, especially when mobile connections are involved. The downside is that UDP itself offers no way to verify whether a datagram has reached the other endpoint.

It is worth noting, however, that nothing prevents a protocol on the next layer up from implementing a handshake or tracking of missing packets on top of UDP. And that is exactly what has happened. QUIC is a fairly recent addition to the protocol family, originally designed at Google and still being officially drafted by the IETF. QUIC is a connection-oriented transport layer protocol that runs over UDP, and thus gains the reduced latency of UDP while bringing many of TCP’s reliability features with it. QUIC is quickly gaining popularity, and all major web browsers already support it despite it still being a draft. QUIC definitely deserves its own post, so we will hear more of it later.
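As a toy illustration of that idea, and emphatically not how QUIC does it (QUIC uses packet numbers and acknowledgement frames in a much richer format), an application can prefix each datagram with its own sequence number so the receiver can notice gaps. The 4-byte framing and the helper names below are assumptions made for this sketch.

```python
import struct
from typing import Iterable, List

SEQ = struct.Struct("!I")  # hypothetical 4-byte sequence number, network byte order


def add_sequence_number(seq: int, payload: bytes) -> bytes:
    """Sender side: prefix each payload with its sequence number."""
    return SEQ.pack(seq) + payload


def read_sequence_number(datagram: bytes) -> int:
    """Receiver side: recover the sequence number from a datagram."""
    return SEQ.unpack_from(datagram)[0]


def find_missing(received: Iterable[bytes]) -> List[int]:
    """Return sequence numbers that never arrived, up to the highest one seen."""
    seen = {read_sequence_number(d) for d in received}
    if not seen:
        return []
    return [n for n in range(max(seen) + 1) if n not in seen]


sent = [add_sequence_number(n, b"video fragment") for n in range(8)]
# Pretend datagrams 2, 5 and 6 were lost and the rest arrived out of order.
arrived = [sent[i] for i in (7, 0, 1, 4, 3)]
print(find_missing(arrived))  # [2, 5, 6]
```

A video application might simply skip such gaps, while a reliable protocol would ask for the missing datagrams again. This simple version also cannot notice losses after the highest number received; real protocols handle that with acknowledgements and timers.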

Thank you again for reading, and see you next time!